Voice communication is the lifeline of air traffic control. When a controller clears a pilot for approach or issues a go-around instruction, there is no margin for ambiguity, and certainly no room for phantom noise, dropped packets, or degraded audio quality.

A European Air Navigation Service Provider (ANSP) set out to modernize its voice communication infrastructure by migrating to an IP-based system. What followed was an unexpectedly deep technical investigation, one that ultimately uncovered not one problem but four separate issues hiding in its network and radio environment. This article walks through each of them, explains what was found and how, and draws out the broader lessons for ANSPs considering or managing similar upgrades.

The upgrade that opened a can of worms

When the ANSP switched from analog to IP-based digital radios, the expectation was a straightforward capability improvement. What they didn’t anticipate was how much more sensitive the new equipment would be, and how that sensitivity would expose pre-existing interference sources that the old analog system had simply absorbed in silence.

Almost immediately after the cutover, controllers began hearing strange “clacking” sounds through their headsets. Engineering teams were dispatched, a payment dispute broke out between the ANSP and the equipment vendor, and a systematic investigation began, one that would eventually identify three distinct interference sources and a separate network problem.

Issue #1: Ghost calls are flooding the system

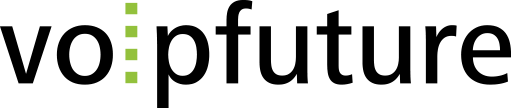

The first symptom was what engineers called “ghost calls”: extremely short squelch events, each lasting under 300 milliseconds. In radio communications, squelch opens a channel when the receiver detects a signal above a set threshold. These ghost activations weren’t carrying actual voice traffic; they were noise triggers masquerading as transmissions.

The scale of the problem was striking. At some frequencies, the system was logging up to 450 squelch events per minute, with a baseline of around 50 per channel per minute. The digital radios, operating in IP mode, were fast enough and sensitive enough to catch every one of them. In analog mode, those same events had gone unregistered entirely.
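To make those numbers concrete, here is a minimal sketch of how ghost calls could be counted from a log of squelch events. The `SquelchEvent` record, its field names, and the channel labels are hypothetical; only the 300-millisecond cutoff comes from the case itself.

```python
from collections import Counter
from dataclasses import dataclass

GHOST_MAX_MS = 300  # cutoff from the case: ghost calls last under 300 ms


@dataclass(frozen=True)
class SquelchEvent:
    channel: str        # hypothetical channel label
    start_s: float      # squelch-open time, seconds since capture start
    duration_ms: float  # how long the squelch stayed open


def ghost_calls_per_minute(events):
    """Count sub-300 ms squelch events per (channel, minute) bucket."""
    counts = Counter()
    for ev in events:
        if ev.duration_ms < GHOST_MAX_MS:
            counts[(ev.channel, int(ev.start_s // 60))] += 1
    return counts


# Illustrative data only, not measurements from the investigation.
events = [
    SquelchEvent("118.100", 0.5, 40),
    SquelchEvent("118.100", 12.0, 40),
    SquelchEvent("118.100", 30.0, 4200),  # a real transmission, ignored
    SquelchEvent("118.100", 61.0, 120),
    SquelchEvent("121.500", 5.0, 40),
]
counts = ghost_calls_per_minute(events)
for (channel, minute), n in sorted(counts.items()):
    print(f"{channel} minute {minute}: {n} ghost call(s)")
```

Bucketing per channel and per minute mirrors the way the figures above are quoted, which makes a 450-per-minute outlier easy to spot against a 50-per-minute baseline.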

The ANSP initially blamed the new equipment, arguing that nothing like this had occurred before the upgrade. Technically, that was true, but not because the interference was new. Careful field analysis eventually traced the source to a defective third-party system installed near the ATC receiving antennas, which was generating electromagnetic interference (EMI) strong enough to trigger the radios’ squelch. The interference had existed before the upgrade; the new radios were simply the first system sensitive enough to detect and surface it.

The downstream effect was significant. The ANSP’s voice communication system (VCS) wasn’t designed to handle that volume of short-burst events, leaving controllers with a persistent, distracting noise environment during operations.

Issue #2: Interference with a pattern

The second interference type behaved differently and, in some ways, more suspiciously. Rather than appearing randomly, these events followed a strict rhythm: some occurred every 70–85 seconds, others at precise 5-minute intervals. Each event generated exactly two audio packets of 20 milliseconds each, relayed from the radio to the VCS.

That kind of regularity rules out random EMI. It points to something systematic. Investigation using a passive monitoring system identified the source: distant external systems emitting sweep signals across the entire ATC frequency band. In one documented case, two sweep signals separated by 5 MHz were triggering two short squelch events within 10 seconds of each other, a pattern that was only visible once the monitoring system began correlating frequency-level events over time.
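The reasoning here, that regular gaps point to a systematic source, can be sketched in a few lines. This is an illustration rather than the monitoring system's actual method: the function names, the tolerance values, and the sample timestamps are all assumptions.

```python
from statistics import median


def inter_event_gaps(timestamps_s):
    """Gaps in seconds between consecutive events, after sorting."""
    ts = sorted(timestamps_s)
    return [later - earlier for earlier, later in zip(ts, ts[1:])]


def looks_periodic(timestamps_s, tolerance_s=1.0, min_gaps=3):
    """Return (is_periodic, period_s).

    An event train counts as periodic when every gap lies within
    tolerance_s of the median gap. Both thresholds are assumptions.
    """
    gaps = inter_event_gaps(timestamps_s)
    if len(gaps) < min_gaps:
        return False, None
    period = median(gaps)
    return all(abs(g - period) <= tolerance_s for g in gaps), period


# Illustrative timestamps in seconds, not data from the investigation.
five_minute_train = [0, 300, 600, 900, 1200]
sweep_like_train = [0, 72, 155, 230, 311]   # gaps of 72, 83, 75, 81 s
random_emi = [3, 47, 410, 455, 1190]

print(looks_periodic(five_minute_train))
print(looks_periodic(sweep_like_train, tolerance_s=8.0))
print(looks_periodic(random_emi))
```

A tight tolerance suits the precise 5-minute pattern, while the 70–85 second pattern needs a looser one, which is why the tolerance is left as a parameter.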

Unlike the EMI from the defective nearby system, these external sources couldn’t simply be switched off. Instead, they are now continuously tracked to ensure their impact remains within acceptable operational limits.

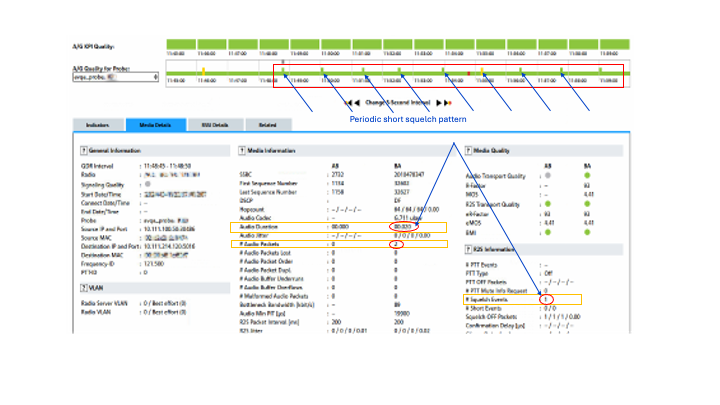

Issue #3: A rising noise floor

The third interference issue was less dramatic in appearance but equally serious in effect: periodic spikes in background noise levels across the frequency band. Monitoring revealed recurring power peaks that could cause receiver desensitization, rendering the radio less capable of receiving legitimate transmissions during those windows.

In ATC communications, where a controller might need to hear a pilot transmission at a critical moment, any degradation of receiver sensitivity is a genuine safety concern. This type of interference is also the hardest to diagnose without continuous monitoring, because the peaks are intermittent and wouldn’t show up in spot-check testing.
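One simple way to surface such peaks from a continuous power log is to compare each sample against a running baseline. The sketch below uses the median as that baseline; the 10 dB margin and the dBm values are assumptions chosen for illustration, not figures from the investigation.

```python
from statistics import median


def noise_peaks(samples_dbm, margin_db=10.0):
    """Return (index, power) for samples at least margin_db above the median."""
    baseline = median(samples_dbm)
    return [
        (i, power)
        for i, power in enumerate(samples_dbm)
        if power - baseline >= margin_db
    ]


# Hypothetical noise-floor readings in dBm, one per measurement interval.
samples_dbm = [-110, -109, -111, -110, -92, -110, -109, -95, -111, -110]
peaks = noise_peaks(samples_dbm)
print(peaks)
```

With only two of ten intervals affected, a single spot check would most likely land on a quiet sample, which is the diagnostic trap described above.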

How cross-coupling turned small problems into system-wide ones

Any one of these interference issues, in isolation, might have been manageable. What made the situation significantly worse was a standard ATC practice called cross-coupling: the ability for controllers to transmit simultaneously across multiple frequencies. It’s a legitimate and useful operational feature, but in this case it meant that a single interference event on one frequency was automatically replicated across all coupled channels, multiplying its impact.

The voice recording system, which captures all transmissions for safety review and incident investigation, was particularly hard hit. Designed to handle normal operational call volumes, it wasn’t provisioned for the flood of short phantom transmissions being replicated across the entire system. The recording infrastructure had to be expanded to cope with the load.
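The multiplication effect is easy to underestimate, so here is a rough back-of-the-envelope model. It assumes, as a simplification, that every short event on a coupled frequency is recorded once on each frequency in its coupling group and that groups do not overlap. The frequency labels and group layout are hypothetical; the per-minute rates reuse the figures quoted earlier.

```python
def recorder_load(events_per_min, coupling_groups):
    """Estimate short events per minute reaching the recorder.

    Simplified model: an event on any frequency in a coupling group is
    recorded on every frequency in that group. Groups must be disjoint.
    """
    grouped = set()
    total = 0
    for group in coupling_groups:
        grouped.update(group)
        source_events = sum(events_per_min.get(ch, 0) for ch in group)
        total += source_events * len(group)
    # Frequencies outside any group are recorded once.
    total += sum(n for ch, n in events_per_min.items() if ch not in grouped)
    return total


# One badly affected frequency and three at the baseline rate.
events_per_min = {"F1": 450, "F2": 50, "F3": 50, "F4": 50}

uncoupled = recorder_load(events_per_min, [])
coupled = recorder_load(events_per_min, [("F1", "F2", "F3")])
print(uncoupled, coupled)
```

Under these assumptions, coupling three frequencies nearly triples the recorder's load, which is consistent with the need to expand the recording infrastructure.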

A side finding that turned out to matter: network quality issues

While the interference investigation was underway, the monitoring tool surfaced something entirely unrelated to the radio problems. The backbone network itself was showing signs of strain.

The key quality metric for ATC voice communications is the Mean Opinion Score (MOS), and the target is set by EUROCAE ED-136, which requires that voice transmissions consistently achieve a MOS of 4.0 or higher. Analysis of network traffic revealed deviations from this threshold, driven by packet loss and, more strikingly, extreme jitter exceeding 398 milliseconds.

To put that in context: for an ANSP backbone network, which is purpose-built rather than a general-purpose enterprise LAN, jitter values in that range are highly abnormal. Jitter at that scale creates perceptible gaps in voice transmission, potentially affecting how clearly a pilot hears instructions or how accurately a controller can monitor a situation. Unlike the radio interference issues, which affected specific frequencies, these network problems were impacting all radios across multiple sites simultaneously.
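For readers who want to see what a jitter figure of that size means at packet level, the sketch below measures how far each inter-arrival gap strays from the nominal packet spacing. This is one simple definition of jitter, assumed here for illustration; the article does not state which definition the monitoring tool uses. The 20-millisecond spacing matches the packet length mentioned earlier, and the arrival times are invented.

```python
PACKET_INTERVAL_MS = 20.0  # nominal spacing of 20 ms audio packets


def interarrival_deviation_ms(arrival_ms, interval_ms=PACKET_INTERVAL_MS):
    """Absolute deviation of each inter-arrival gap from the nominal interval."""
    return [
        abs((later - earlier) - interval_ms)
        for earlier, later in zip(arrival_ms, arrival_ms[1:])
    ]


# Hypothetical arrival times in ms: the fourth packet is held up, and the
# packet behind it arrives almost immediately afterwards.
arrivals_ms = [0.0, 20.0, 40.0, 458.0, 460.0, 480.0]
deviations = interarrival_deviation_ms(arrivals_ms)
print(deviations)
print(max(deviations))
```

A stall like this is roughly twenty packet intervals long, far more than a typical jitter buffer is sized to absorb, so it is heard as a gap in the audio.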

This finding wasn’t on anyone’s radar when the investigation started. It surfaced as a byproduct of comprehensive monitoring, and might have gone undetected until it caused a more serious operational incident.

Why standard monitoring tools weren’t enough

Resolving all four issues required a monitoring approach that conventional tools weren’t designed to deliver. The monitoring system used here operates passively: it captures and analyzes voice communications in real time without touching the operational infrastructure. More importantly, it doesn’t rely on averaged metrics. Instead, it applies fixed time-slice analysis, examining call quality in 5-second segments. That granularity is what made it possible to identify the 40-millisecond ghost call events and the 398-millisecond jitter spikes, both of which would have been smoothed out and made invisible by averaged monitoring data.
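The difference between averaged and time-sliced views can be shown with synthetic data. The sketch below is not the monitoring product's implementation; it assumes one minute of 20-millisecond packets with 1 millisecond of jitter each, plus a single 398-millisecond spike, and compares the overall mean with the per-slice maximum.

```python
SLICE_MS = 5000  # 5-second slices, as described in the article


def slice_maxima(samples, slice_ms=SLICE_MS):
    """Map slice index -> worst value seen in that slice.

    samples is an iterable of (time_ms, value) pairs.
    """
    worst = {}
    for time_ms, value in samples:
        idx = time_ms // slice_ms
        worst[idx] = max(worst.get(idx, value), value)
    return worst


# Synthetic data: 3000 packets at 20 ms spacing (one minute), 1 ms jitter each.
samples = [(i * 20, 1.0) for i in range(3000)]
samples[1234] = (samples[1234][0], 398.0)  # one spike at 24.68 s

mean_jitter = sum(value for _, value in samples) / len(samples)
maxima = slice_maxima(samples)

print(f"one-minute mean: {mean_jitter:.2f} ms")
for idx in sorted(maxima):
    print(f"slice {idx}: max {maxima[idx]:.0f} ms")
```

The one-minute mean stays close to 1 millisecond and looks healthy, while the slice covering 20 to 25 seconds reports the spike in full.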

The ability to simultaneously track voice quality and network performance across the same timeline was also critical. It allowed engineers to distinguish between interference symptoms and root causes, and to understand how the various issues were interacting with each other, particularly the cross-coupling multiplication effect.

What this case teaches us

A few takeaways stand out from this investigation:

- Infrastructure upgrades don’t always introduce new problems. Sometimes they just make existing ones visible for the first time. The ANSP’s new IP-based radios weren’t defective; they were simply doing their job more thoroughly than the analog equipment they replaced.

- Problems in ATC systems rarely exist in isolation. A single interference source can cascade through cross-coupled channels, overload recording infrastructure, and mask other underlying issues simultaneously. Understanding the interaction between components matters as much as identifying individual fault points.

- Comprehensive and continuous monitoring is what makes complex, multi-root-cause problems solvable. Point-in-time diagnostics or averaged metrics aren’t sufficient for environments where a 40-millisecond event or a brief jitter spike can have real operational consequences.

As aviation communication systems continue to evolve, moving toward more IP-based, software-defined infrastructure, the ability to monitor them with this level of precision will only become more important. This case is a reminder that clear, reliable communication in ATC isn’t just a technical requirement. It’s an operational imperative.